🎯 In short, NVIDIA is opening up the software that manages GPU resources for AI workloads in Kubernetes, so the wider community can help maintain it.

What Happened

NVIDIA has donated its Dynamic Resource Allocation (DRA) Driver for GPUs to the Cloud Native Computing Foundation (CNCF), announcing the move at KubeCon Europe in Amsterdam. Until now, managing GPU-accelerated AI workloads required specialized tools controlled by vendors. With this donation, NVIDIA is transferring control to the broader Kubernetes community, so developers from different companies can contribute to the codebase and directly influence how GPUs are scheduled.

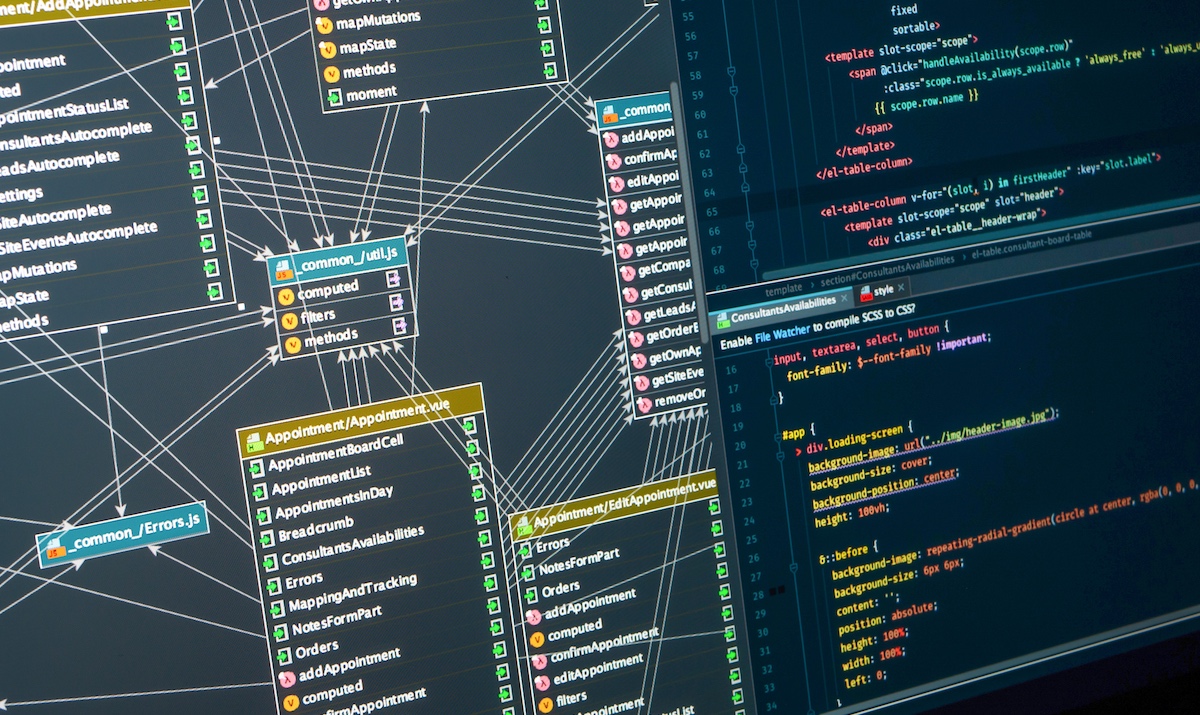

This shift is expected to increase collaboration within the community and make it easier for organizations to manage their GPU resources efficiently. The DRA Driver sits between Kubernetes and the GPU hardware and governs how compute resources are allocated to containerized workloads. That role matters most for enterprises that rely heavily on AI workloads.
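To make the allocation model more concrete, here is a minimal Python sketch that builds the two Kubernetes objects usually involved in DRA: a ResourceClaim requesting a GPU, and a Pod that consumes that claim. The article does not include manifests, so the specifics are assumptions: the `resource.k8s.io/v1` API version (older clusters use beta versions with a slightly different request shape), the `gpu.nvidia.com` device class name, and the object names and image, which are hypothetical.

```python
import json

# Assumptions, not taken from the article:
API_VERSION = "resource.k8s.io/v1"  # DRA API group/version; varies by cluster version
DEVICE_CLASS = "gpu.nvidia.com"     # device class assumed to be installed by the driver


def gpu_claim(name, count=1):
    """Build a ResourceClaim manifest asking for `count` GPUs."""
    return {
        "apiVersion": API_VERSION,
        "kind": "ResourceClaim",
        "metadata": {"name": name},
        "spec": {
            "devices": {
                "requests": [
                    {
                        "name": "gpu",
                        "exactly": {
                            "deviceClassName": DEVICE_CLASS,
                            "allocationMode": "ExactCount",
                            "count": count,
                        },
                    }
                ]
            }
        },
    }


def pod_using_claim(pod_name, claim_name, image):
    """Build a Pod manifest whose single container consumes the claim."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            # Pod-level reference to the claim object.
            "resourceClaims": [{"name": "gpu", "resourceClaimName": claim_name}],
            "containers": [
                {
                    "name": "main",
                    "image": image,
                    # Container-level reference to the pod-level entry above.
                    "resources": {"claims": [{"name": "gpu"}]},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical names and image, for illustration only.
    claim = gpu_claim("single-gpu")
    pod = pod_using_claim("trainer", "single-gpu", "example.com/trainer:latest")
    print(json.dumps([claim, pod], indent=2))
```

The printed JSON could be saved to a file and passed to `kubectl apply -f` on a cluster where DRA and a GPU driver are already set up. The point of the sketch is the indirection: the Pod names a claim rather than a specific device, and the driver resolves that claim to hardware.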

Who's Affected

The impact of this donation extends to a wide range of stakeholders, including cloud service providers, developers, and organizations utilizing AI for various applications. Major players such as Amazon Web Services, Google Cloud, and Microsoft are collaborating with NVIDIA on this initiative. The move is seen as a significant milestone for open-source Kubernetes and AI infrastructure, as it democratizes access to GPU orchestration tools. Organizations involved in scientific computing, like CERN, also stand to benefit. They can now leverage community-driven tooling to process vast amounts of data across both traditional and machine learning workloads. This collaboration fosters an environment where innovation can thrive, enabling more efficient and effective AI solutions.

What Data Was Exposed

The donation itself exposes no sensitive data; its relevance here is that it improves the security options available for AI workloads. The DRA Driver supports several capabilities that are critical for large-scale AI infrastructure, such as GPU sharing and dynamic reconfiguration of hardware resources at runtime. In addition, the integration of Kata Containers with GPU support provides stronger workload isolation, which helps keep sensitive data protected during AI training and inference.

This approach addresses a significant gap in security for GPU-accelerated Kubernetes environments, where traditional container security measures may not suffice. By introducing hardware-backed isolation and programmable policy controls through tools like OpenShell, NVIDIA is paving the way for more secure AI operations.
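The GPU sharing mentioned in this section can be pictured with a toy model. The Python sketch below is not the DRA Driver's implementation; it is a simplified illustration, with hypothetical class and method names, of the idea that several workloads referencing the same claim end up on the same device, and that the device returns to the pool once the last consumer detaches.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class Claim:
    """A named request bound to one device and shared by its consumers."""
    name: str
    device: str
    consumers: Set[str] = field(default_factory=set)


class ToyAllocator:
    """Toy stand-in for claim-based allocation (hypothetical, simplified)."""

    def __init__(self, devices: List[str]):
        self.free: List[str] = list(devices)
        self.claims: Dict[str, Claim] = {}

    def attach(self, claim_name: str, consumer: str) -> str:
        """Attach a consumer to a claim, allocating a device on first use."""
        claim = self.claims.get(claim_name)
        if claim is None:
            if not self.free:
                raise RuntimeError("no free device for claim " + claim_name)
            claim = Claim(claim_name, self.free.pop(0))
            self.claims[claim_name] = claim
        claim.consumers.add(consumer)
        return claim.device

    def detach(self, claim_name: str, consumer: str) -> None:
        """Detach a consumer; release the device when nobody uses the claim."""
        claim = self.claims[claim_name]
        claim.consumers.discard(consumer)
        if not claim.consumers:
            self.free.append(claim.device)
            del self.claims[claim_name]
```

In this model, two consumers that attach to a claim named `shared` receive the same device, while a consumer using a different claim name gets the next free one. Real schedulers also weigh node placement, device attributes, and policy, all of which this sketch leaves out.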

What You Should Do

Organizations utilizing Kubernetes for AI workloads should take note of this development. It is advisable to explore the capabilities of the newly donated DRA Driver and consider how it can be integrated into existing infrastructure. Engaging with the Kubernetes community can provide valuable insights and updates on best practices for GPU orchestration.

Furthermore, businesses should evaluate their current security measures, particularly for AI workloads handling sensitive data. Implementing Kata Containers and utilizing the DRA Driver can enhance security and operational efficiency. Staying informed about advancements in cloud-native technologies will be crucial for maintaining a competitive edge in the rapidly evolving AI landscape.

🔒 Pro insight: This donation aligns with industry trends toward community-driven cloud solutions, potentially accelerating the adoption of GPU resources in enterprise AI applications.