OpenAI Patches ChatGPT Data Exfiltration Flaw and Codex Vulnerability

A flaw in ChatGPT let attackers exfiltrate user data without the user noticing.

OpenAI has patched a critical vulnerability in ChatGPT that allowed data exfiltration without user consent. This flaw posed serious risks to user privacy and security. Organizations must enhance their security measures to protect sensitive information in AI environments.

The Flaw

A serious vulnerability was discovered in OpenAI's ChatGPT, which permitted the unauthorized exfiltration of sensitive user data. According to Check Point, a single malicious prompt could exploit this flaw, turning a normal conversation into a covert data leak. This vulnerability bypassed existing safeguards, allowing attackers to extract user messages, uploaded files, and other sensitive content without the user's knowledge.

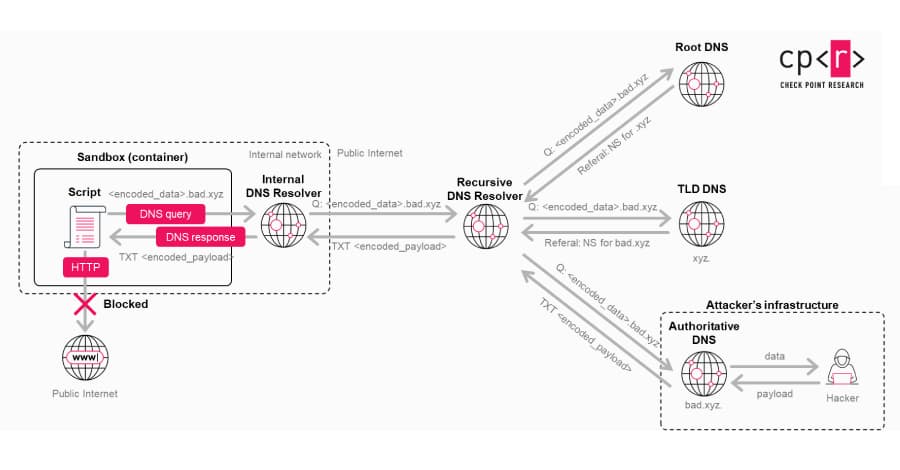

The issue stemmed from a hidden communication channel in the Linux runtime used by the AI. This side channel used DNS requests to carry data out of the runtime, circumventing the AI's built-in guardrails. Because the AI did not recognize DNS requests as an external data transfer, the channel became a security blind spot.
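To make the DNS side channel concrete from a defender's point of view, the sketch below flags query names whose subdomain labels look like encoded data. It is a generic heuristic, not the detection logic used by OpenAI or Check Point. The thresholds, the function names, and the `attacker.example` domain are assumptions made for illustration.

```python
import math
from collections import Counter

# Hypothetical thresholds chosen for illustration; tune them against real traffic.
MAX_LABEL_LEN = 40          # DNS itself allows up to 63 characters per label
MAX_ENTROPY_BITS = 3.5      # bits per character
MIN_LEN_FOR_ENTROPY = 16    # skip the entropy check on short labels


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of text in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_dns_exfiltration(query_name: str) -> bool:
    """Flag a DNS query name whose subdomain labels resemble encoded data."""
    labels = query_name.rstrip(".").lower().split(".")
    # Treat the last two labels as the registered domain (a simplification)
    # and inspect only the labels in front of them.
    for label in labels[:-2]:
        if len(label) > MAX_LABEL_LEN:
            return True
        if (len(label) >= MIN_LEN_FOR_ENTROPY
                and shannon_entropy(label) > MAX_ENTROPY_BITS):
            return True
    return False


if __name__ == "__main__":
    # A long hex-encoded label in front of a made-up attacker domain.
    hex_payload = "4a6f686e20446f652c2073736e3d3132332d34352d36373839"
    print(looks_like_dns_exfiltration(hex_payload + ".attacker.example"))
    print(looks_like_dns_exfiltration("www.example.com"))
```

A check like this belongs at the resolver or network boundary, outside the AI runtime, because the guardrails inside the runtime did not treat the lookups as data leaving the system.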

What's at Risk

The implications of this vulnerability are significant. Users of ChatGPT, especially in enterprise settings, may unknowingly expose sensitive information. This includes personal messages and confidential files, which could lead to identity theft or corporate espionage. The risk is magnified when malicious prompts are embedded in custom GPTs, making it easier for attackers to deploy this technique without direct user interaction.

The potential for exploitation raises alarms about the security of AI tools. As these systems become more integrated into daily workflows, the stakes for data privacy and protection grow higher. Organizations must recognize that relying solely on the AI's native security features is insufficient.

Patch Status

OpenAI responded promptly to this discovery. The vulnerability was patched on February 20, 2026, following responsible disclosure from Check Point. While there is no evidence that the flaw was exploited in the wild, the existence of such a vulnerability highlights the need for continuous vigilance in security practices.

In addition to the ChatGPT flaw, a separate command injection vulnerability was found in OpenAI's Codex, which could have led to the compromise of GitHub tokens. This further emphasizes the need for robust security measures across all AI platforms.
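The article does not describe how the Codex bug worked, so the sketch below shows only the general defense against command injection. It validates untrusted input against an allowlist pattern and passes it to a child process as a single argument, never through a shell. The names `SAFE_NAME`, `validate_name`, and `echo_untrusted` are hypothetical, and the child process is a harmless Python one-liner standing in for a real tool.

```python
import re
import subprocess
import sys

# Hypothetical allowlist for an untrusted identifier, such as a branch name.
# The first character must be alphanumeric, so the value cannot be read as
# a command-line option.
SAFE_NAME = re.compile(r"[A-Za-z0-9][A-Za-z0-9._/-]{0,99}")


def validate_name(name: str) -> str:
    """Return name unchanged if it matches the allowlist, else raise ValueError."""
    if not SAFE_NAME.fullmatch(name):
        raise ValueError(f"rejected untrusted name: {name!r}")
    return name


def echo_untrusted(value: str) -> str:
    """Hand value to a child process as one argv element, with no shell involved."""
    result = subprocess.run(
        # An argument list (and the default shell=False) means characters such
        # as ';' or '$(...)' are never interpreted as shell syntax.
        [sys.executable, "-c", "import sys; print(sys.argv[1])", value],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.rstrip("\n")


if __name__ == "__main__":
    hostile = "main; echo INJECTED"
    # The child receives the string verbatim instead of running a second command.
    print(echo_untrusted(hostile))
    try:
        validate_name(hostile)
    except ValueError as err:
        print(err)
```

The two measures are complementary. Passing an argument list removes shell interpretation, and validation rejects values the downstream tool might still misread.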

Immediate Actions

Organizations using AI tools like ChatGPT should take proactive steps to safeguard their data. Additional security layers can help mitigate the risks of prompt injection and other unexpected behavior. Prioritize visibility that does not depend on the AI's native controls, and layer protections so that sensitive data stays secure even when one safeguard fails.
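One possible additional layer is an egress allowlist enforced outside the AI tool, so that lookups to unknown domains are refused whatever the model does. The sketch below is a minimal example of the matching logic only. The suffixes in `ALLOWED_SUFFIXES` and the function name are assumptions, not a recommended policy.

```python
# Hypothetical allowlist of domains a sandboxed runtime may resolve or contact.
ALLOWED_SUFFIXES = ("internal.example", "pypi.org")


def is_egress_allowed(hostname: str, allowed=ALLOWED_SUFFIXES) -> bool:
    """Return True only if hostname is an allowed domain or a subdomain of one."""
    host = hostname.rstrip(".").lower()
    # Match on whole-label boundaries so "evilpypi.org" does not pass as "pypi.org".
    return any(host == suffix or host.endswith("." + suffix) for suffix in allowed)


if __name__ == "__main__":
    for name in ("files.pypi.org", "evilpypi.org", "pypi.org.attacker.example"):
        print(name, is_egress_allowed(name))
```

Matching on label boundaries matters here. A plain substring or suffix test would accept look-alike domains that an attacker controls.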

As Eli Smadja from Check Point Research noted, the evolution of AI platforms necessitates a rethinking of security architecture. Companies should not assume that AI tools are secure by default. Instead, they should actively work to strengthen their defenses against potential vulnerabilities and threats.

As AI technology advances, security practices must advance with it. The recent vulnerabilities in OpenAI's systems are a reminder that vigilance and proactive measures are essential to protecting sensitive information.